Supercharging AI Video and AI Inference Performance with NVIDIA L4 GPUs

By a mysterious writer

Description

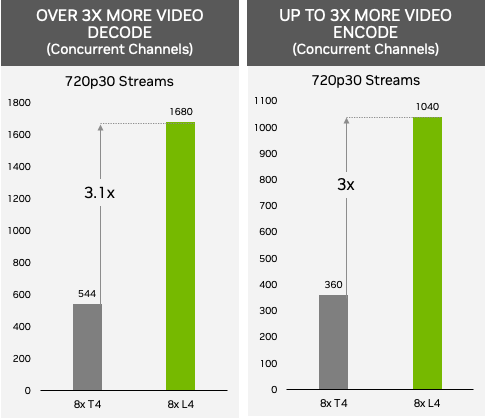

NVIDIA T4 was introduced 4 years ago as a universal GPU for use in mainstream servers. T4 GPUs achieved widespread adoption and are now the highest-volume NVIDIA data center GPU. T4 GPUs were deployed…

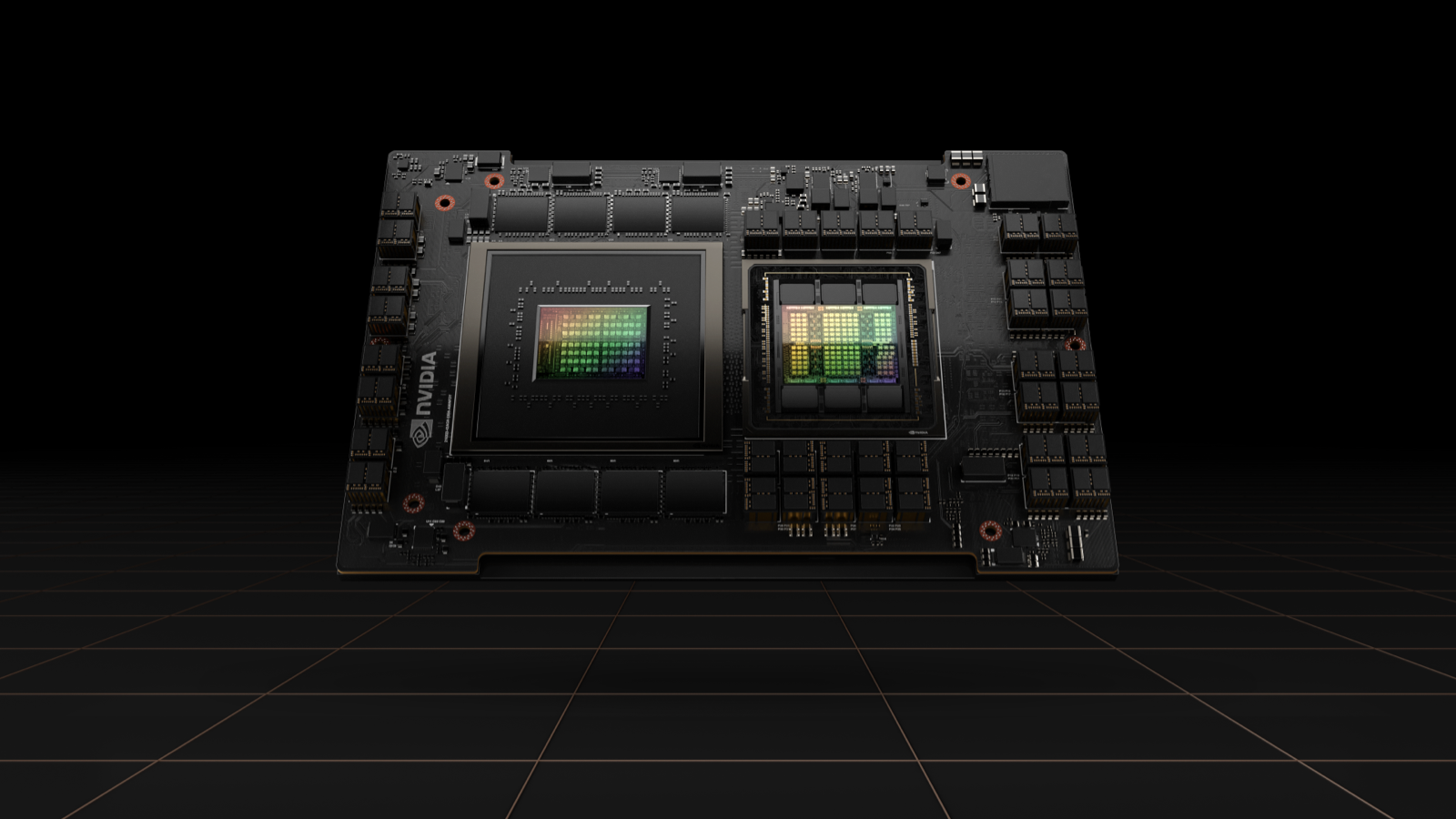

NVIDIA Hopper H100 & L4 Ada GPUs Achieve Record-Breaking

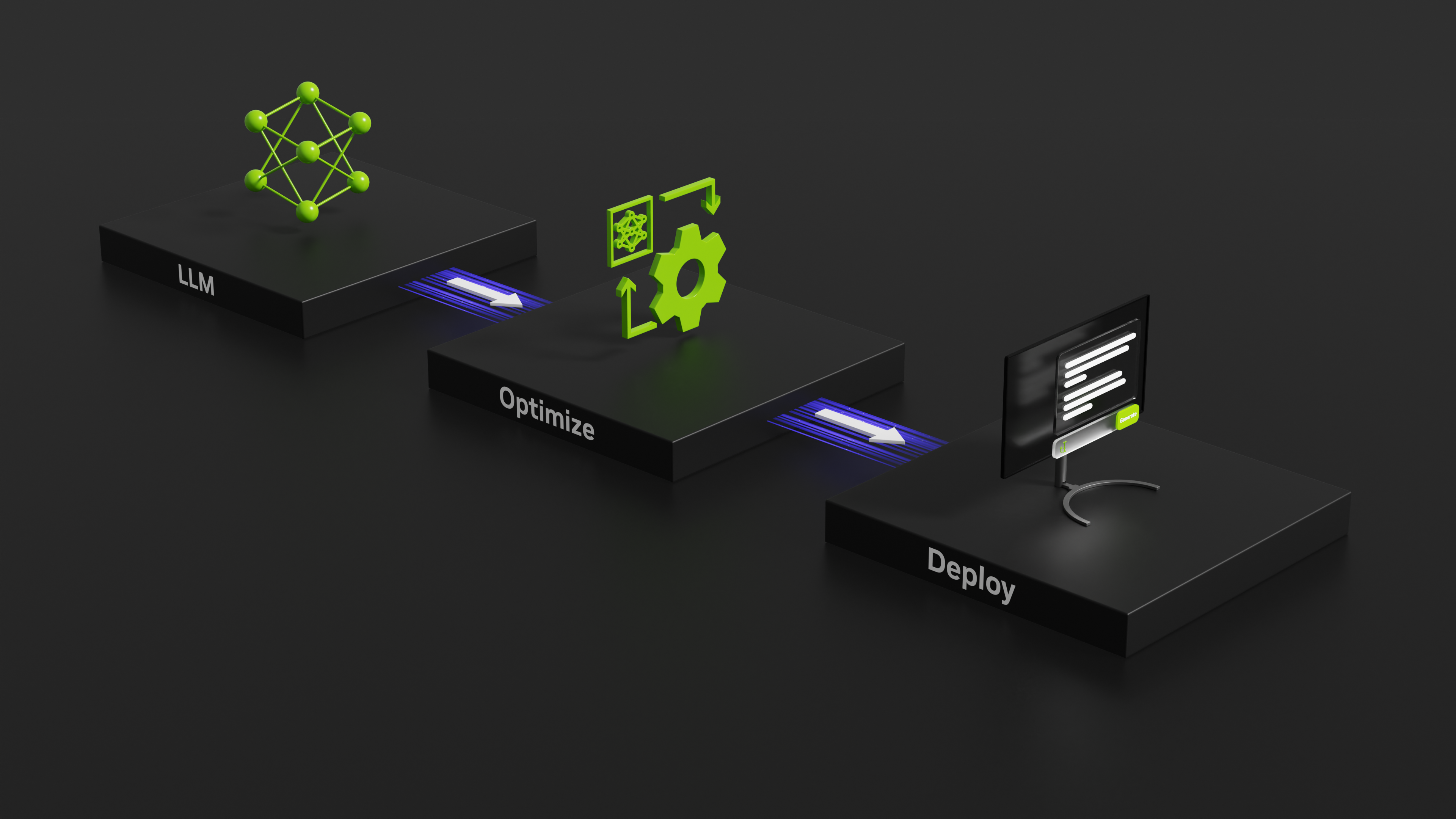

NVIDIA TensorRT-LLM Supercharges Large Language Model Inference on

Nvidia Unveils GPUs for Generative Inference Workloads like ChatGPT

L40S GPU for AI and Graphics Performance

Choosing a Server for Deep Learning Inference

NVIDIA GTC 2023 Recap - DGX H100, Grace Superchip, H100 NVL, L40

H100 Tensor Core GPU

NVIDIA Launches Inference Platforms for Large Language Models and

Ken He on LinkedIn: NVIDIA Takes Inference to New Heights Across