(PDF) Incorporating representation learning and multihead attention

By a mysterious writer

Description

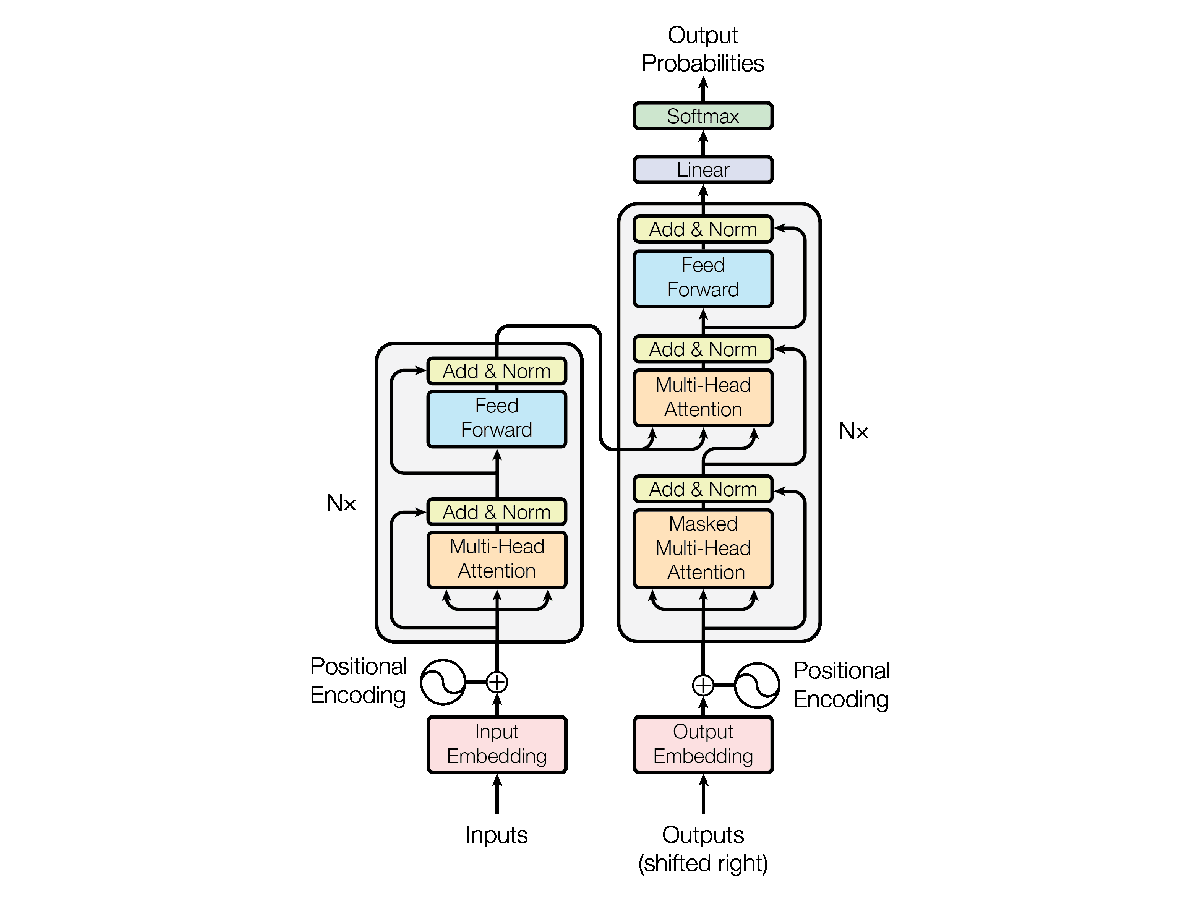

Understanding the Transformer Model: A Breakdown of “Attention is All You Need”, by Srikari Rallabandi, MLearning.ai

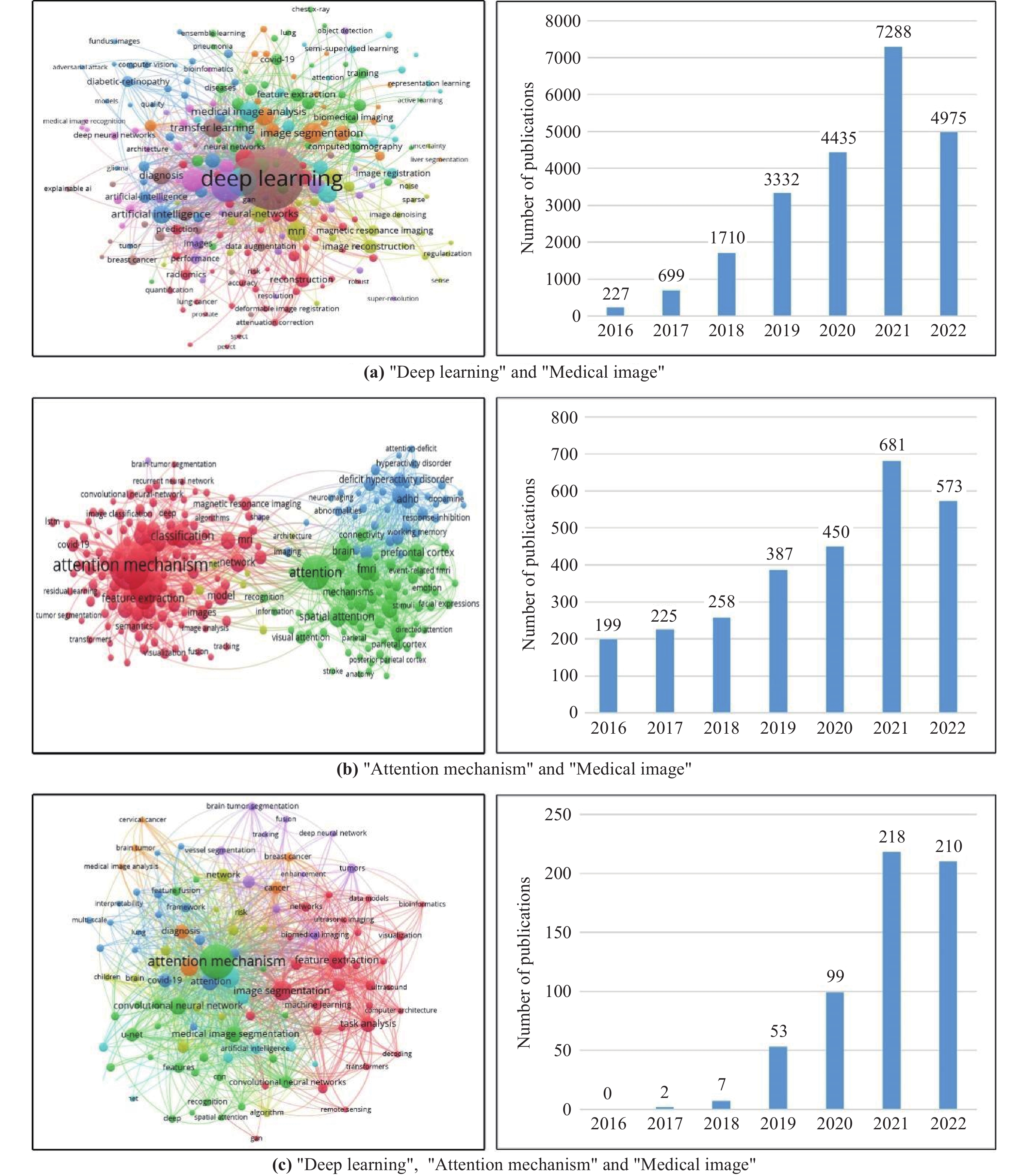

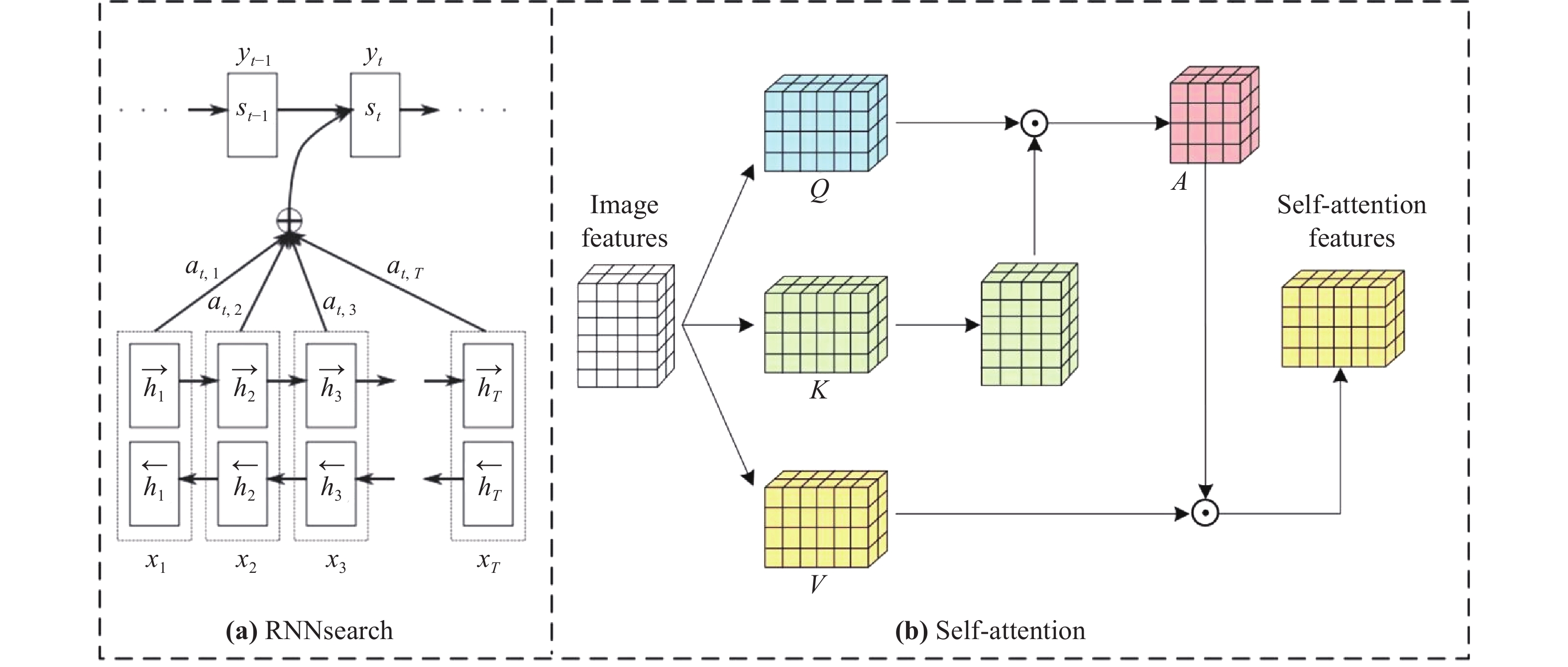

Deep Learning Attention Mechanism in Medical Image Analysis: Basics and Beyonds - Scilight

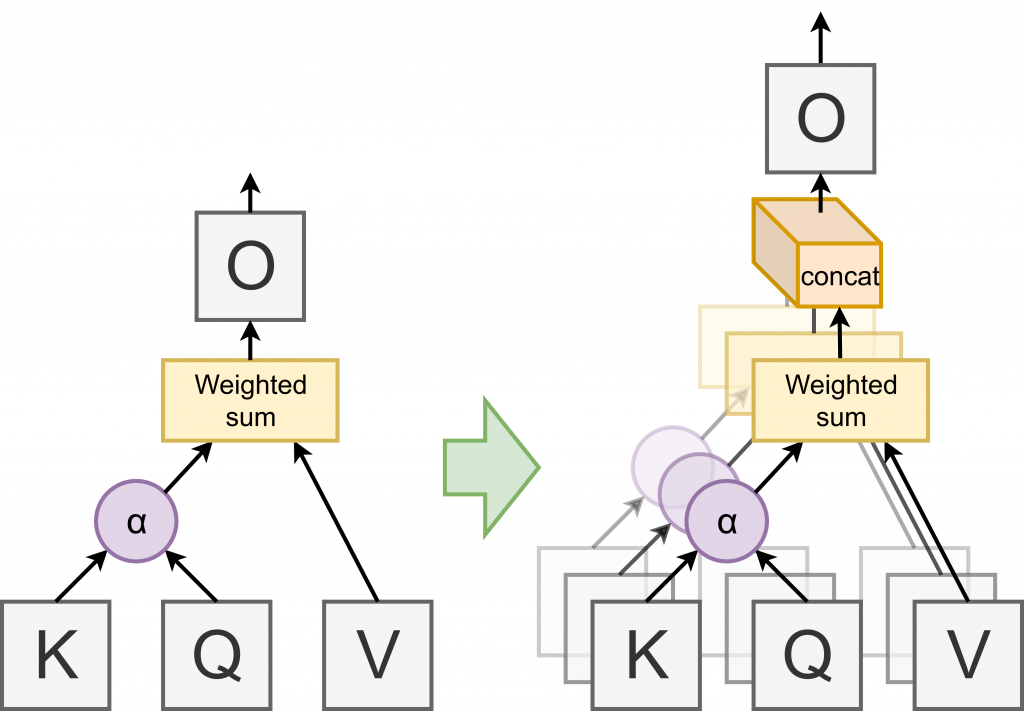

Are Sixteen Heads Really Better than One? – Machine Learning Blog, ML@CMU

Transformer (machine learning model) - Wikipedia

Entropy, Free Full-Text

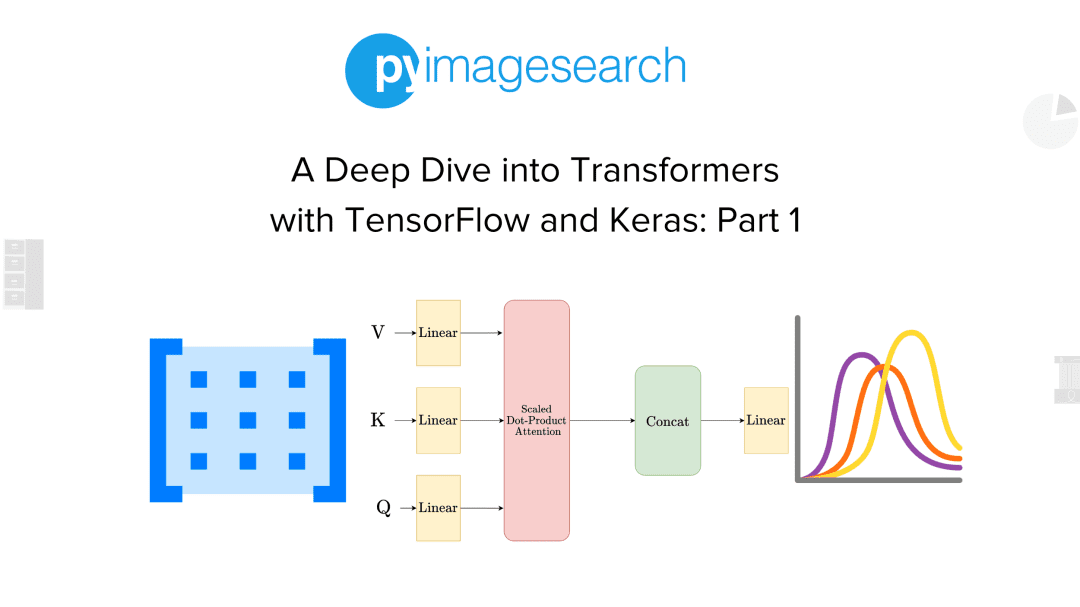

A Deep Dive into Transformers with TensorFlow and Keras: Part 1 - PyImageSearch

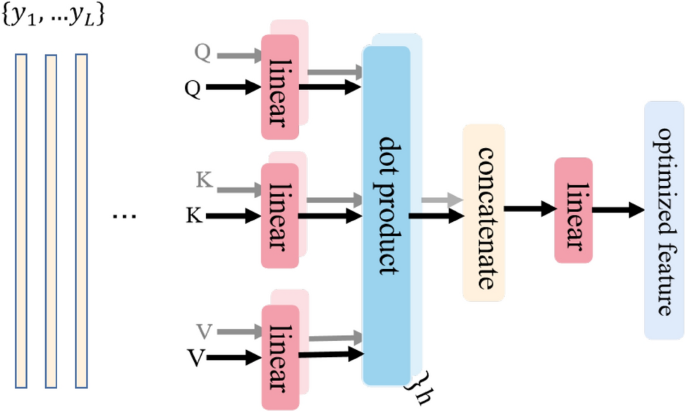

Multi-head attention-based two-stream EfficientNet for action recognition

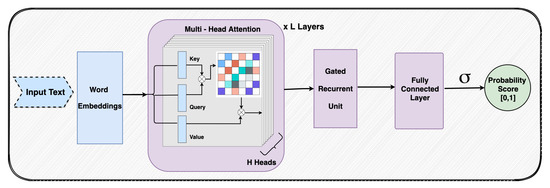

[PDF] Interpretable Multi-Head Self-Attention Architecture for Sarcasm Detection in Social Media